Where Does AI Meaningfully Fit Into Curriculum And Assessment?

contributed by Dr. Athena Stanley

Artificial intelligence is increasingly present in education conversations. Some teachers are experimenting with it. Others are cautious. Many are simply unsure where it belongs or whether it belongs at all.

A recent Gallup poll found that three in ten teachers use AI weekly, and its findings point to improvements in the quality of certain tasks. The same poll estimated that AI-supported work could save the equivalent of roughly six weeks over the course of a year.

Meanwhile, a RAND study found that more than half of students and teachers report already using AI in school contexts, even as formal guidance and policy have struggled to keep pace.

Amid concerns about plagiarism, bias, and the potential impact on students’ critical thinking skills, uncertainty is understandable. The question, then, may not be whether AI exists in education, but where it meaningfully fits within curriculum and assessment.

In some classrooms or contexts, integration may be limited in scope and highly intentional, emphasizing critical examination rather than routine or active use.

Several instructional domains offer starting points for this reflection. Rather than positioning AI as a solution or a threat, educators might consider how, and whether, it aligns with their instructional goals, assessment practices, and professional values.

1. Curriculum Planning and Lesson Design

Curriculum planning is one area where AI may intersect with teacher workflow, particularly during early stages of lesson design or brainstorming. Teachers may feel overwhelmed by the task, have too many ideas competing for attention, or be looking for ways to refresh familiar approaches. AI may help ease this “blank page” pressure by offering general overviews or serving as a brainstorming partner.

AI may also support more specific elements of lesson and unit planning, such as identifying alignment between objectives, assessments, and rubrics. In addition, it may help teachers organize ideas for difficult communications, turning lists of notes or journal-style reflections into clear, professional messages.

For example, teachers might revise a unit overview by asking AI to outline what to keep or cut based on specific criteria, such as eliminating redundancy to fit a shorter timeframe or incorporating a new instructional approach their school is implementing.

2. Assessment and Feedback Workflows

Assessment design and feedback are central to instructional practice, and these processes may be areas where AI tools intersect with teacher decision-making. Teachers’ time is valuable, and teaching requires constant attentiveness and flexibility. Routine tasks such as writing instructions, developing feedback starters, planning discussion prompts, or designing rubrics often take more time than is available during the school day, frequently spilling into evenings and weekends.

AI may help by handling some low-risk, low-stakes production tasks, such as converting notes into a rubric or generating draft feedback language. This may reduce drafting time, potentially freeing space for instructional decision-making and student interaction. The usefulness of AI-generated drafts often depends on the specificity and context teachers provide through their prompts. By setting clear parameters and describing learning levels, class culture, and intended outcomes, teachers may spend minutes refining a draft rather than hours starting from scratch.

3. Differentiation and Accessibility

Differentiation remains one of the most complex and essential aspects of teaching. Meeting students where they are while providing an appropriate level of challenge requires time, flexibility, and thoughtful planning. AI tools may offer support in generating varied examples, scaffolds, or alternative explanations that teachers can adapt to meet diverse learning needs.

Differentiating instruction is time-consuming, and limited planning capacity might stand in the way of even the most skilled teachers. AI may support more continual and responsive differentiation by generating materials for teacher review and refinement in a matter of minutes.

For instance, AI could produce releveled texts, generate variations of practice questions, or offer multiple explanations of the same concept. It could also create supportive add-ons, such as lists of potentially challenging vocabulary that students could preview before instruction and reference during lessons. When thoughtfully used, these supports may contribute to more equitable access to learning.

4. Digital Literacy and Evaluation

Beyond instructional workflow, AI may present an opportunity to strengthen students’ digital literacy and evaluation skills. Students of all ages already encounter AI in their daily lives, often through the people and digital platforms around them. Teachers who model responsible AI use could help students develop essential digital literacy skills.

Ethical and thoughtful AI use becomes a powerful teachable moment when teachers focus on evaluating AI-generated output, drawing on background knowledge, cross-referencing sources, spotting inaccuracies, and asking better questions. This approach helps students build confidence and develop a toolkit for using AI appropriately, without allowing it to replace their own thinking.

5. Professional Growth and Future Readiness

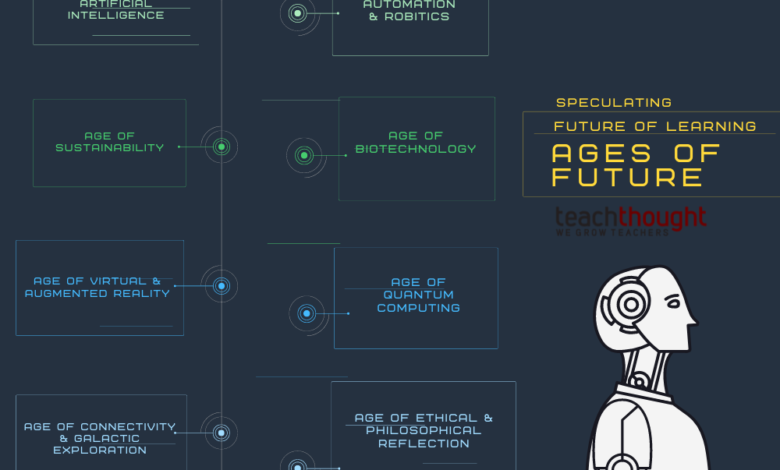

As AI continues to evolve, education faces the challenge of balancing present instructional goals with preparing learners to navigate emerging technologies responsibly. In some contexts, preparing students for college and careers may include helping them understand how to work thoughtfully alongside AI tools.

As AI becomes more widespread, teachers’ familiarity with it may help keep their instruction relevant across subject areas, from STEM and language arts to social studies and the creative fields. Importantly, using AI does not replace a teacher’s expertise; it amplifies it.

Responsible Use

Where AI is incorporated into curriculum or assessment, clear expectations around ownership, integrity, and boundaries are essential. AI may assist instructional planning or student work, but educators must continue to uphold standards of academic honesty, transparency, and privacy.

Students could be encouraged to disclose when AI tools have supported their thinking and to explain how those tools were used. Teachers might model responsible practice by avoiding the entry of personal or identifying information into AI systems and by openly discussing limitations such as bias and inaccuracy. Engaging students in examining these limitations may strengthen evaluative skills and reinforce the role of human judgment. AI is imperfect, and learning to question its outputs may be one of the most valuable lessons it offers.

Conclusion

Rather than asking whether AI should be used in classrooms, educators might begin by asking where it meaningfully aligns with their curriculum, assessment practices, and professional values. Thoughtful integration requires clarity, boundaries, and ongoing reflection. When teachers approach AI not as a shortcut or mandate, but as a consideration within their broader instructional design, they retain what matters most: professional judgment.

In that space of intentionality, AI becomes not a disruption, but a decision.

Dr. Athena Stanley, a Marquette native and proud Yooper, holds a Ph.D. in Curriculum, Instruction, and the Science of Learning from the University at Buffalo (2018), and an M.A.E. in Instruction (2013) and B.A. in Elementary Education (2010) from Northern Michigan University (NMU). Dr. Stanley is the Founder and CEO of Athena Global Learning (AGL). A former NMU assistant professor in Applied Workplace Leadership, she is in her 16th year in education and draws on 14 years of international teaching, curriculum development, and leadership experience in Ecuador, Turkey, and China. She holds a Michigan Teaching Certificate and Harvard’s School Leadership and Management Certificate.
